Terra Master F2-210 NAS Review

Testing Methodology

| System Configuration | |
|---|---|
| Case | Open Test Table |
| CPU | Intel Core i7 9700K |
| Motherboard | EVGA Z390 FTW |
| RAM | (2) 8GB Corsair DDR4-3200 CMW16GX4M2C3200C16 |
| GPU | MSI RTX 2080 SUPER GAMING X TRIO |
| Hard Drives | Corsair Force MP510 NVMe Gen 3 x4 M.2 SSD (480GB) |
| Network Cards | Dual Port Intel Pro/1000 PT; Mellanox ConnectX-2 PCI-Express x8 10GbE Ethernet Network Server Adapter |
| Switches | MikroTik Cloud Router Switch CRS317-1G-16S+RM (SwitchOS) Version 2.9. Transceivers used: 10Gtek for Cisco Compatible GLC-T/SFP-GE-T Gigabit RJ45 Copper SFP Transceiver Module, 1000Base-T; 10Gtek for Cisco SFP-10G-SR, 10Gb/s SFP+ Transceiver module, 10GBASE-SR, MMF, 850nm, 300-meter; 10Gtek for Cisco SFP-10G-T-S 10GBase-T SFP+ 10 Gigabit RJ45 Copper Transceiver 30m |
| Power Supply | Thermaltake Toughpower RGB 80 Plus Gold 750W |

Two Western Digital Red 8 TB 5400 RPM desktop drives were installed and used in the F2-210 NAS tests.

A dual-port Intel network card was installed in the test system.

The F2-210 was tested in RAID 0 and RAID 1 configurations.

Network Layout

For all tests, the NAS was configured to use a single network interface. The network card was used to test 1Gbps connections. For the 1Gbps connection, one CAT 6 cable ran from the NAS to the MikroTik CRS317-1G-16S+RM and one CAT 6 cable ran from the switch to the workstation. Testing was done with only one network card active on the PC. The switch was cleared of any configuration.

Note: I wasn’t able to find any options to set MTU on the NAS itself. Default 1500 MTU settings were used on the NAS interface.
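To put the 1500 MTU in context, the short sketch below estimates the best-case TCP payload rate on a 1Gbps link. It is a back-of-the-envelope calculation, not a measurement: it assumes IPv4 and TCP headers without options (40 bytes) and standard Ethernet framing overhead (38 bytes per frame on the wire), and the function name is only illustrative.

```python
# Rough ceiling for TCP payload throughput over Ethernet at a given MTU.
# Assumptions: IPv4 + TCP with no header options, no VLAN tag.

ETH_OVERHEAD = 38    # preamble/SFD 8 + header 14 + FCS 4 + inter-frame gap 12
IP_TCP_HEADERS = 40  # IPv4 header 20 + TCP header 20


def max_tcp_throughput_mb_s(link_bps: float, mtu: int) -> float:
    """Return the theoretical TCP payload rate in MB/s (1 MB = 10**6 bytes)."""
    payload = mtu - IP_TCP_HEADERS   # useful bytes carried per frame
    on_wire = mtu + ETH_OVERHEAD     # bytes the frame occupies on the wire
    return link_bps / 8 / 1e6 * payload / on_wire


if __name__ == "__main__":
    for mtu in (1500, 9000):
        rate = max_tcp_throughput_mb_s(1e9, mtu)
        print(f"MTU {mtu}: about {rate:.1f} MB/s")
```

Under these assumptions a 1GbE link tops out a little under 119 MB/s at MTU 1500, so benchmark results close to that figure are limited by the network rather than by the NAS or its drives.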

Network drivers used on the workstation were 5.50.14643.1 by Mellanox Technologies (driver date 8/26/2018) for the 10GbE adapter and 9.15.11.0 by Intel (driver date 10/14/2011) for the dual-port adapter.

Software

All testing was done with a single client accessing the NAS.

Crystal Disk Mark is an old favorite disk benchmarking tool that we have used for many years. It provides useful information on the read and write speeds of the target. You can get your own free copy right here.

ATTO Disk Benchmark gives good insight into the read and write speeds of the drive. In our tests, we ran it against the “share” on the NAS. ATTO Disk Benchmark can be downloaded right here.

Anvil Storage Utilities is a comprehensive storage testing program that provides plenty of information and options for each test. Anvil Storage Utilities can be downloaded right here.

NAS Performance Tester is a free utility that benchmarks the read and write performance, in megabytes per second, of network-attached storage connected through SMB/CIFS network shares. Get your own copy here.

Every test was run three times, and the results were averaged to get the final figure.

With only two drives, you are limited to JBOD, RAID 0, and RAID 1 (RAID 10 requires at least four drives). RAID 0 and RAID 1 were tested for 1GbE and 10GbE connections.

Tests were run after all the RAID arrays were fully synchronized.

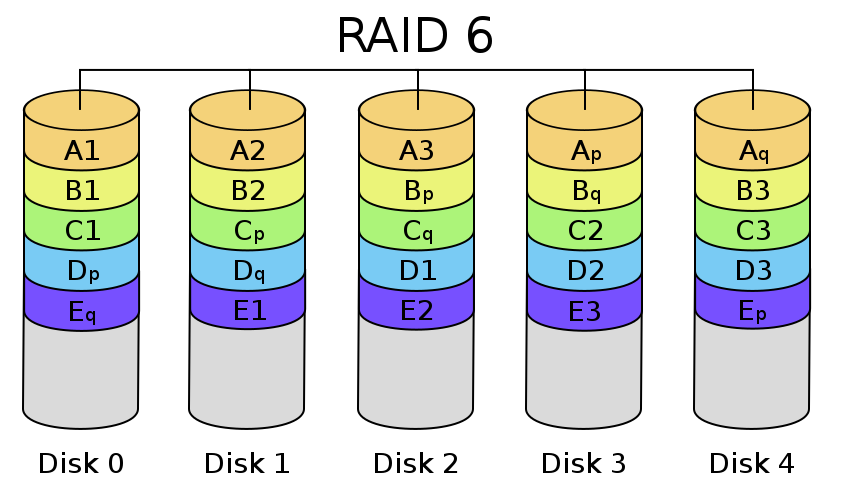

RAID Information

Images courtesy of Wikipedia

JBOD, or Just a Bunch Of Disks, is exactly what the name describes. The hard drives have no actual RAID functionality: they are spanned into a single volume, and data is neither striped nor mirrored across them.

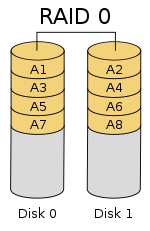

RAID 0 is a stripe set, and data is written across the disks evenly. The advantage of RAID 0 is speed and increased capacity. With RAID 0 there is no redundancy, so the failure of a single drive loses the data on the whole array.

RAID 1 is a mirrored set, and data is mirrored from one drive to another. The advantage of RAID 1 is data redundancy, as each piece of data is written to both disks. The disadvantage of RAID 1 is that write speed is lower than with RAID 0, because every write operation is performed on both disks. RAID 1 capacity is that of the smallest disk.

RAID 10 combines the first two RAID levels and is a stripe set built from mirrored pairs. This allows for the better speed of a RAID 0 array with the redundancy of a RAID 1 array.
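As a quick illustration of how these levels trade capacity for redundancy, the sketch below computes usable capacity for each one. It assumes the conventional definitions (JBOD spans all disks, RAID 0 and RAID 1 are limited by the smallest disk, RAID 10 needs an even number of at least four disks) and ignores filesystem and system-partition overhead, so real usable space on the NAS will be somewhat lower. The function name and level labels are illustrative only.

```python
def usable_capacity_tb(level: str, disks_tb: list[float]) -> float:
    """Approximate usable capacity in TB for a set of disks at a RAID level."""
    n = len(disks_tb)
    smallest = min(disks_tb)

    if level == "JBOD":
        return sum(disks_tb)     # disks are simply spanned end to end
    if level == "RAID0":
        return n * smallest      # stripes are limited by the smallest disk
    if level == "RAID1":
        return smallest          # every disk holds the same data
    if level == "RAID10":
        if n < 4 or n % 2 != 0:
            raise ValueError("RAID 10 needs an even number of disks, at least 4")
        return (n // 2) * smallest   # half the disks hold mirror copies
    raise ValueError(f"unknown level: {level}")


if __name__ == "__main__":
    drives = [8.0, 8.0]  # the two 8 TB drives used in this review
    for level in ("JBOD", "RAID0", "RAID1"):
        print(level, usable_capacity_tb(level, drives), "TB")
```

With the two 8 TB drives used here, this gives 16 TB for JBOD and RAID 0 and 8 TB for RAID 1.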

For a full breakdown of RAID levels, take a look at the Wikipedia article here.

RAID configurations are a highly debated topic. RAID has been around for a very long time. Hard drives have changed, but the technology behind RAID really hasn’t. So what may have been considered ideal a few years ago may not be ideal today. If you are solely relying on multiple hard drives as a safety measure to prevent data loss, you are in for a disaster. Ideally, you will use a multi-drive array for an increase in speed and lower access times and have a backup of your data elsewhere. I have seen arrays with hot spares that had multiple drives fail and the data was gone.

Great post. Is it possible to solder two more RAM chips onto the motherboard?

Is it possible? Of course. Humanly, probably not.

I strongly recommend NOT soldering anything to this board yourself.

I bought this device in May 2020. It worked out of the box in a RAID 1 configuration, but it was unable to spin the disks down. I really wanted an always-on device, but I did not want to burn 26W and spin the disks when idle. The device draws ~1A from 12V (~12W) when the disks are spinning and ~0.4A (~5W) when the disks are stopped. It runs the latest TOS 4.1.24, which is actually OpenWRT with a JavaScript web interface written on top of it.
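To show what those current readings mean over a year, here is a small sketch that converts them to watts and annual energy. It assumes the figures quoted above (12V supply, ~1A spinning, ~0.4A stopped), a device that is powered on 24 hours a day, and it ignores losses in the power adapter; the names are illustrative.

```python
SUPPLY_VOLTS = 12.0  # DC input voltage quoted in the comment above


def watts(amps: float) -> float:
    """Power drawn at the 12 V input for a given current."""
    return amps * SUPPLY_VOLTS


def annual_kwh(power_w: float, hours_per_day: float = 24.0) -> float:
    """Energy used per year in kWh at a constant power draw."""
    return power_w * hours_per_day * 365 / 1000


if __name__ == "__main__":
    spinning_w = watts(1.0)  # disks spinning
    stopped_w = watts(0.4)   # disks stopped
    saving = annual_kwh(spinning_w) - annual_kwh(stopped_w)
    print(f"spinning: {spinning_w:.1f} W, stopped: {stopped_w:.1f} W")
    print(f"upper bound on yearly saving: {saving:.1f} kWh")
```

The saving printed is an upper bound, since it assumes the disks would otherwise be stopped all day; the real figure depends on how many hours the box is actually idle.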

The reason the disks were not stopping is that the entire OS resides on the disks and, *surprise*, it is configured to write information and error logs to a file on disk. Because the designers forgot to disable logging, the disks spin up every time someone logs in, opens the user interface, or the NAS renews its IP address from your home DHCP server. If any of this happens within 30 minutes, the disks never stop. There are also a few configuration bugs, so the error messages that sometimes pop up from Samba or iptables spin up the disks too.

When using a DHCP server at home, set the lease time to a long interval, because the NAS renews its lease halfway through the validity period. A 1-hour lease will keep the disks spinning permanently, because the minimum spindown timeout that can be set from the browser interface is 30 minutes.
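The interaction between lease time and spindown timeout can be sketched as a simple check. It assumes the client renews at half the lease time, as described above (this is also the DHCP default for the renewal timer), that each renewal wakes the disks, and that nothing else touches them in between; the function name is hypothetical.

```python
def disks_can_spin_down(lease_minutes: float, spindown_minutes: float = 30.0) -> bool:
    """Return True if the idle gap between DHCP renewals exceeds the spindown timeout."""
    renewal_interval = lease_minutes / 2  # renewal happens halfway through the lease
    return renewal_interval > spindown_minutes


if __name__ == "__main__":
    for lease in (60, 120, 24 * 60):
        verdict = "can stop" if disks_can_spin_down(lease) else "never stop"
        print(f"lease {lease} min: disks {verdict}")
```

With a 30-minute timeout, a 1-hour lease renews every 30 minutes and the disks never get a long enough idle gap, while a 24-hour lease leaves a 12-hour window between renewals.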

I can only guess that the designers added the internal flash drive with the intent of placing logs and other frequently accessed information on it; it is mapped as /mnt/usb disk and shows 120MB of free space. But because the mount point is on the HDD, access to the flash also spins up the disks!

The disks will also be woken by the optional “Apps”, such as Google Drive Sync and the DLNA server. These applications are rather handy, and installation is easy. Needless to say, the box can act as a full-featured Web (multiple virtual servers), FTP, NFS, SVN, Git, or Docker server, plus a few more. It is a Linux machine with really decent performance. But be prepared for the disks to be spinning all the time.

When fresh HDDs are installed (I used Seagate IronWolf 4TB), it takes 24 to 40 hours to build the RAID journal. During this time the box will produce head-movement sounds continuously; this is unexpected, but normal.

It is possible to log in to TOS via an SSH terminal and also to browse, copy, and edit files from a PC using the WinSCP utility. This way, anyone familiar with Linux internals can tweak the configuration files to change the low-level configuration. After two days of studying, I managed to configure it to stay in a mostly low-power state when idle.

There is a debate over whether NAS disks are more reliable when permanently spinning, but when nobody accesses the box at night, a low-power, low-noise standby mode is very desirable.

In summary, the box is solid, flexible, and has good price/performance, but it takes some work to improve the TOS configuration and work around the bugs.