Nvidia GeForce RTX 2080 Ti Founders Edition & RTX 2080 Founders Edition GPU Review

Turing up to 11

What’s in a name? Turing, or more specifically Alan Turing, was an English computer scientist, mathematician, and cryptanalyst, and held a few other titles besides. He devised the Turing test, which is designed to test a computer’s ability to pass for a human. To pass the test, the computer (or program) must fool a judge into thinking they are interacting with a real human. This is just a small part of what is called artificial intelligence, or A.I. One of the features of Nvidia’s latest GPU is acceleration of A.I.-based features in the form of Tensor Cores. Hence the name Turing, or so I would imagine.

It has been just over two years since Nvidia launched a new GPU architecture. Pascal was launched in May of 2016, and now it is time for Nvidia to launch its latest creation: Turing.

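The full TU102 GPU, the chip behind the RTX 2080 Ti, features six Graphics Processing Clusters (GPCs), 36 Texture Processing Clusters (TPCs), and 72 Streaming Multiprocessors (SMs). Each SM contains 64 CUDA cores, 8 Tensor Cores, one RT Core, a 256 KB register file, four texture units, and 96 KB of L1/shared memory. In grand total, this gives the full TU102 the following (the RTX 2080 Ti ships with 68 of the 72 SMs enabled, so its totals, listed in the comparison table below, are slightly lower):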

4608 CUDA Cores

72 RT Cores

576 Tensor Cores

288 Texture units
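The totals above are simply the per-SM figures multiplied by the SM count. As a quick sanity check, here is a minimal Python sketch of that arithmetic. The `PER_SM` table and the `gpu_totals` helper are names made up for this illustration. The one-RT-Core-per-SM figure is an assumption inferred from 72 RT Cores across 72 SMs, and the 68-SM case uses the SM count that the comparison table lists for the RTX 2080 Ti.

```python
# Per-SM resources as described in the text.
PER_SM = {
    "CUDA cores": 64,
    "Tensor cores": 8,
    "RT cores": 1,        # assumption: one per SM (72 RT cores on 72 SMs)
    "Texture units": 4,
}


def gpu_totals(sm_count):
    """Multiply each per-SM resource by the number of enabled SMs."""
    return {name: per_sm * sm_count for name, per_sm in PER_SM.items()}


full_tu102 = gpu_totals(72)   # all 72 SMs enabled
rtx_2080_ti = gpu_totals(68)  # SM count from the comparison table

for name in PER_SM:
    print(f"{name}: {full_tu102[name]} with 72 SMs, {rtx_2080_ti[name]} with 68 SMs")
```

With 72 SMs the sketch reproduces the list above, and with 68 SMs it reproduces the CUDA, Tensor, and RT core counts in the RTX 2080 Ti column of the table.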

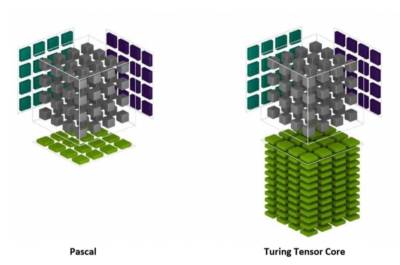

When you compare Pascal to Turing, there is a significant difference between the two.

| GPU Features | GTX 1080 Ti | RTX 2080 Ti |
| --- | --- | --- |
| Architecture | Pascal | Turing |
| GPCs | 6 | 6 |
| TPCs | 28 | 34 |
| SMs | 28 | 68 |
| CUDA Cores / SM | 128 | 64 |
| CUDA Cores / GPU | 3584 | 4352 |
| Tensor Cores / SM | NA | 8 |
| Tensor Cores / GPU | NA | 544 |
| RT Cores | NA | 68 |

Nvidia has further refined parallel processing within each SM. The Turing SM is partitioned into four processing blocks, each with 16 FP32 Cores, 16 INT32 Cores, two Tensor Cores, one warp scheduler, and one dispatch unit. Turing also implements a major revamp of the core execution datapaths. Modern shader workloads typically mix floating-point arithmetic instructions such as FADD or FMAD with simpler instructions, such as integer adds for addressing and fetching data, or floating-point compare and min/max for processing results. In previous shader architectures, the floating-point math datapath sits idle whenever one of these non-FP-math instructions runs. Turing adds a second parallel execution unit next to every CUDA core that executes these instructions in parallel with floating-point math.
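To get a feel for why a separate integer datapath helps, here is a deliberately simplified Python sketch. It is a toy model, not a description of how Turing schedules work: it ignores dependencies, memory latency, and warp scheduling, and the instruction mix is a hypothetical one chosen only for illustration. The function names are made up for this example. The only figure taken from the text is the 16 FP32 and 16 INT32 lanes per processing block.

```python
def cycles_shared(fp_ops, int_ops, lanes=16):
    """Single shared datapath: FP and integer instructions take turns."""
    return (fp_ops + int_ops) / lanes


def cycles_concurrent(fp_ops, int_ops, lanes=16):
    """Separate FP32 and INT32 datapaths: the longer stream sets the time."""
    return max(fp_ops, int_ops) / lanes


# Hypothetical instruction mix, not a measured workload.
fp_ops, int_ops = 1000, 400

shared = cycles_shared(fp_ops, int_ops)
concurrent = cycles_concurrent(fp_ops, int_ops)
speedup = shared / concurrent

print(f"shared datapath:    {shared} cycles")
print(f"separate datapaths: {concurrent} cycles")
print(f"ideal speedup:      {speedup:.2f}x")
```

In this idealized model, the more integer work a shader mixes in (up to an even split), the more the second datapath pays off. Real gains will depend on the actual workload.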

Tensor Cores bring the A.I. functionality to Turing. Tensor Cores were originally introduced in the Volta GV100 GPU. They accelerate the features of Nvidia’s NGX neural services for graphics enhancement and rendering. One such example, which will be tested later in this review, is DLSS, or Deep Learning Super Sampling. DLSS is a new way to do super sampling that gives the same visual fidelity as Temporal Anti-Aliasing (TAA), but with less of a performance hit.

Ray tracing is often proclaimed the Holy Grail of rendering realistic computer graphics. It is a rendering technology that realistically simulates the lighting of a scene and its objects, and Turing GPUs can do this in real time. Rasterization has been used for years and is still used in games today. Nvidia uses a combination of rasterization where needed and ray tracing where it provides the most visual benefit. This is called hybrid rendering, and it utilizes the RT Cores in each SM. At the time of this review, DirectX Raytracing and Windows ML are not generally available; they will be included in the October 2018 Windows update. However, they are available when developer mode is enabled.

Turing is much more than just the few technology highlights I’ve mentioned here. As the drivers mature and software becomes available, we will be revisiting Turing to test those specific areas. Since the launch of Turing, the internet has been ablaze with speculation about how it will perform in games available today: games that you are playing right now.

Packaging

Nvidia stays true to form with its black and green color scheme. Inside these heavy-duty cardboard boxes sit the latest entries from team green.

After sliding the tops off the boxes, the RTX cards are proudly displayed. Each card is wrapped in a protective film to keep the finish looking sharp. Included in the kit are a quick user’s guide, a warranty pamphlet, and a DisplayPort to DVI adapter.